Moving target detection based on improved ghost suppression and adaptive visual background extraction

Source journal: Journal of Central South University (English Edition), 2021, Issue 3

Authors: QU Zhong, LIU Ling, CHAI Guo-hua

Pages: 747-759

Key words: moving target detection; ghost suppression; adaptive visual background extraction

Abstract: The visual background extraction algorithm (ViBe) uses the first frame to initialize the background model, which can easily introduce a “ghost”. Because ViBe uses a fixed segmentation threshold to separate the foreground from the background, it produces many false detections in highly dynamic backgrounds. To solve these problems, an improved ghost suppression and adaptive visual background extraction algorithm is proposed in this paper. Firstly, using the pixel’s temporal and spatial information, historical pixels from the odd frames of the video sequence are combined in a certain proportion to initialize the background model. Secondly, the background sample set is combined with the neighborhood pixels to determine the complexity of the background and to obtain an adaptive segmentation threshold. Thirdly, the update rate is adjusted according to the complexity of the background. Finally, the detection result is post-processed to achieve better detection results. The experimental results show that the improved algorithm not only suppresses the “ghost” quickly, but also detects better in complex dynamic backgrounds.

Cite this article as: LIU Ling, CHAI Guo-hua, QU Zhong. Moving target detection based on improved ghost suppression and adaptive visual background extraction [J]. Journal of Central South University, 2021, 28(3): 747-759. DOI: https://doi.org/10.1007/s11771-021-4642-9.

J. Cent. South Univ. (2021) 28: 747-759

DOI: https://doi.org/10.1007/s11771-021-4642-9

LIU Ling(刘玲)1, CHAI Guo-hua(柴国华)2, QU Zhong(瞿中)2

1. College of Mobile Telecommunications of Chongqing University of Posts and Telecommunications, Chongqing 401520, China;

2. College of Computer Science and Technology, Chongqing University of Posts and Telecommunications, Chongqing 400065, China

Central South University Press and Springer-Verlag GmbH Germany, part of Springer Nature 2021


1 Introduction

Moving object detection is a research hotspot in the field of computer vision, which plays an important role in intelligent monitoring [1-3]. Recently, the influence of complex scenes, shadows and light changes has brought difficulties to accurate detection.

To solve these problems, the ViBe algorithm is widely used because of its low computational complexity and good real-time performance [4]. In this paper, the commonly used moving target extraction algorithms are studied, and the visual background extraction (ViBe) algorithm, which belongs to the background subtraction methods, is analyzed. ViBe extracts moving targets in three steps: background modelling, foreground extraction and background model updating. But the algorithm also has some disadvantages. For example, when moving targets exist in the first frame, it easily introduces a “ghost”, and it then requires many frames to eliminate the “ghost” completely. In addition, the ViBe algorithm uses a fixed threshold to segment the foreground and background, which cannot adapt to dynamic backgrounds.

In this paper, the ViBe algorithm is improved to achieve better detection. Our main contributions can be summarized as follows:

1) The historical pixels of the odd frames are used to initialize the background model, to suppress the “ghost”. If the initialization does not completely eliminate the “ghost”, we utilize the spatial consistency principle of neighborhood pixels to rapidly eliminate the “ghost”.

2) The background sample information of the pixel and its neighborhood information are combined to determine the complexity of the background, from which a self-adaptive segmentation threshold and a self-adaptive update rate are obtained.

3) A post-processing operation is done, which fills the holes in detection and eliminates some isolated noise pixels.

The remainder of this paper is arranged as follows. Section 2 presents an overview of the ViBe algorithm. Section 3 describes the improved method in detail. Section 4 presents the experimental results and analysis of the improved method. Finally, Section 5 concludes this paper and outlines future research directions.

2 Related work

The commonly used methods of moving target detection are the inter-frame difference method [5, 6], the optical flow method [7] and the background subtraction method [8-10]. At present, background subtraction methods are widely used; they can be divided into the Gaussian mixture model [11], the CodeBook model [12, 13] and the ViBe algorithm [14, 15]. The ViBe algorithm has low computational complexity and good real-time performance [4], and many scholars have proposed improvements based on it. LU et al [16] initialized the background model using a diamond-shaped neighborhood to suppress the “ghost”. PAK et al [17] used the idea of inter-frame difference to identify “ghost” pixels, which accelerated the “ghost” elimination. CHANG et al [18] obtained the current segmentation threshold by calculating the mean value of the minimum distances of the current pixel’s background sample values. FAN et al [19] used a confidence relationship model to detect moving targets in complex environments.

2.1 Background model initialization

The ViBe algorithm assumes that neighborhood pixels have similar spatial distributions. So, for each pixel, its neighborhood pixels are randomly selected to establish the background sample set of the current pixel, and the size of the sample set is N. The background model consists of the background sample sets of all pixels. The background sample set of the current pixel x is defined as:

$$M(x) = \{\, y_i(x) \mid y_i(x) \in Nei(x),\ i = 1, 2, \ldots, N \,\} \tag{1}$$

where M(x) is the background sample set of the current pixel x; Nei(x) is the eight-neighborhood of the pixel x; yi(x) is a pixel randomly selected from Nei(x); and i is the index of the background sample value (i=1, 2, …, N).
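The initialization described above can be sketched as follows. This is a minimal illustration for grey-level frames stored as nested lists, not the authors' C++ implementation; the function name `init_background_model` and the border policy (coordinates are clamped to the image) are assumptions introduced here.

```python
import random

# Offsets of the eight-neighborhood Nei(x)
NEIGHBOR_OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1),
                    (0, 1), (1, -1), (1, 0), (1, 1)]

def init_background_model(frame, n_samples=20, rng=None):
    """Build a sample set M(x) of size N for every pixel of one frame."""
    rng = rng or random.Random()
    h, w = len(frame), len(frame[0])
    model = [[[] for _ in range(w)] for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for _ in range(n_samples):
                dy, dx = rng.choice(NEIGHBOR_OFFSETS)
                # Assumed border policy: clamp coordinates to the image.
                ny = min(max(y + dy, 0), h - 1)
                nx = min(max(x + dx, 0), w - 1)
                model[y][x].append(frame[ny][nx])
    return model
```

Passing a seeded `random.Random` instance makes the sampling reproducible, which is convenient when comparing initialization variants.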

2.2 Foreground and background segmentation

The foreground and background are segmented from the second frame onward. The ViBe algorithm calculates the color Euclidean distance between the current pixel and each value in its background sample set, and then counts the number d of sample points whose Euclidean distance is less than the fixed threshold R. Finally, d is compared with the given threshold dmin. If d is larger than or equal to dmin, the current pixel is classified as a background pixel (BG); otherwise, it is treated as a foreground pixel (FG).

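$$x = \begin{cases} BG, & d\big(\mathrm{dist}(v(x), y_i(x)) < R\big) \ge d_{\min} \\ FG, & \text{otherwise} \end{cases} \tag{2}$$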

where v(x) is the pixel value of the current pixel x; dist(*) is the Euclidean distance between the current pixel x and a value in its background sample set; d(*) is the number of sample points that satisfy the condition; R is the segmentation threshold (R is taken as 20 in ViBe); dmin is the minimum number of matches required (dmin is taken as 2 in ViBe).
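A minimal sketch of this decision rule for a single grey-level pixel is given below. The function name `classify_pixel` is hypothetical, and the absolute grey-level difference stands in for the color Euclidean distance.

```python
def classify_pixel(value, samples, radius=20, d_min=2):
    """Return "BG" if at least d_min samples lie within radius of value."""
    matches = 0
    for s in samples:
        # For grey levels the Euclidean distance reduces to |v(x) - y_i(x)|.
        if abs(value - s) < radius:
            matches += 1
            if matches >= d_min:
                # Early exit: only reaching d_min matters, not the exact count.
                return "BG"
    return "FG"
```

For example, `classify_pixel(100, [90, 105, 200])` finds two samples closer than R=20 and returns `"BG"`, whereas `classify_pixel(100, [90, 200, 210])` finds only one and returns `"FG"`.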

2.3 Background model update

The ViBe algorithm uses a conservative strategy to update the background model: the model is only updated when the pixel is classified as a background pixel. The update rate is fixed, and the update takes place with probability 1/f, where f is the fixed time sampling factor. In that case, the current pixel value replaces a sample value randomly selected from the current pixel’s background sample set. In the same way, with probability 1/f, the background sample set of a randomly selected neighborhood pixel is also updated with the current pixel value.
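The conservative update can be sketched as below, assuming the model is a nested list of per-pixel sample lists. The name `conservative_update` and the clamping at the image border are assumptions made for this illustration.

```python
import random

NEIGHBOR_OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1),
                    (0, 1), (1, -1), (1, 0), (1, 1)]

def conservative_update(model, frame, y, x, f=16, rng=None):
    """Update the model for a pixel already classified as background."""
    rng = rng or random.Random()
    h, w = len(frame), len(frame[0])
    value = frame[y][x]
    # With probability 1/f, overwrite a random sample of the pixel's own set.
    if rng.randrange(f) == 0:
        samples = model[y][x]
        samples[rng.randrange(len(samples))] = value
    # With probability 1/f, propagate the value into a random neighbor's set.
    if rng.randrange(f) == 0:
        dy, dx = rng.choice(NEIGHBOR_OFFSETS)
        ny = min(max(y + dy, 0), h - 1)  # assumed border policy: clamp
        nx = min(max(x + dx, 0), w - 1)
        samples = model[ny][nx]
        samples[rng.randrange(len(samples))] = value
```

The function must only be called for background pixels; foreground pixels never enter the model, which is what makes the strategy conservative.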

3 Improved ViBe detection algorithm

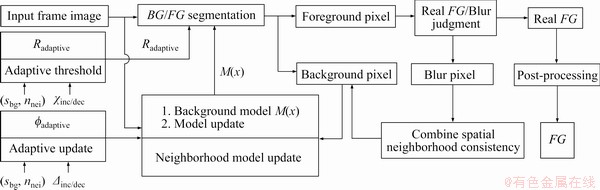

The background model initialization, the global fixed segmentation threshold and the background model update of the ViBe algorithm are improved. The overall scheme of the improved algorithm is shown in Figure 1. In the background model initialization, the historical pixels of the odd frames among the first m frames are combined in the proportion 1:1:2:2:3:3:3:5 to construct the background sample set of each pixel. The sample set information of the current pixel is then combined with the pixel’s neighborhood information to determine the complexity of the background. For the current pixel, the adaptive segmentation threshold and the adaptive update rate are obtained from the complexity of the background. Finally, the detection result is post-processed, as described in Section 3.4.

3.1 Background model initialization

3.1.1 Change the background model initialization

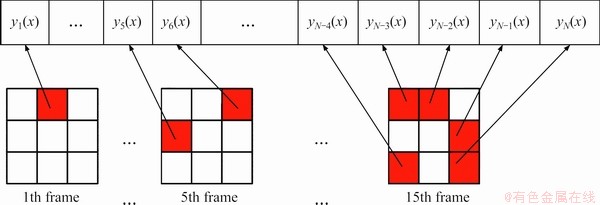

For the ViBe algorithm, when moving targets exist in the first frame, the region occupied by the moving targets does not produce a reliable background model. From the second frame, the real background of that region is revealed, but it does not match the background model. The real background points are therefore detected as foreground points, which are called the “ghost”. In this paper, for each pixel, the improved algorithm selects pixels from the odd frames among the first m frames of the video sequence to initialize the background sample set. For each pixel, this method uses (m+1)/2 frames to select N background sample points, and the numbers of sample points taken from the selected frames follow the proportion 1:1:2:2:3:3:3:5. For example, for the current pixel x, the method randomly selects one sample point from the neighborhood of x in the first frame and one sample point from the neighborhood of x in the third frame, and so on, until five sample points are selected from the neighborhood of x in the fifteenth frame.

Figure 2 shows the improved background model initialization. If there is a moving target in the first frame, the background of the moving target’s region becomes more accurate as later frames are used. So, the improved initialization can establish a relatively reliable background model and reduce some noise.
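The odd-frame initialization for one pixel can be sketched as follows. With m=15 the odd frames are frames 1, 3, …, 15, and the proportion 1:1:2:2:3:3:3:5 sums to N=20. The function name `init_from_odd_frames` and the clamping at the image border are assumptions of this sketch.

```python
import random

RATIO = (1, 1, 2, 2, 3, 3, 3, 5)  # samples taken from frames 1, 3, ..., 15
NEIGHBOR_OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1),
                    (0, 1), (1, -1), (1, 0), (1, 1)]

def init_from_odd_frames(frames, y, x, ratio=RATIO, rng=None):
    """Build the sample set of pixel (y, x) from the odd frames.

    frames[0] is frame 1, so the odd frames are frames[0], frames[2], ...
    """
    rng = rng or random.Random()
    odd_frames = frames[0::2]
    if len(odd_frames) < len(ratio):
        raise ValueError("need at least 2 * len(ratio) - 1 frames")
    h, w = len(frames[0]), len(frames[0][0])
    samples = []
    for frame, count in zip(odd_frames, ratio):
        for _ in range(count):
            dy, dx = rng.choice(NEIGHBOR_OFFSETS)
            ny = min(max(y + dy, 0), h - 1)  # assumed border policy: clamp
            nx = min(max(x + dx, 0), w - 1)
            samples.append(frame[ny][nx])
    return samples
```

Because later frames contribute more samples, a target that is present only in the earliest frames influences at most a few of the 20 samples.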

Figure 1 Overall scheme of improved algorithm

Figure 2 Selection of background sample by improved initialization

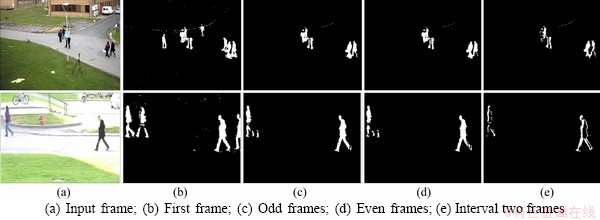

For “ghost” suppression, the sooner the better. If the algorithm suppresses the “ghost” by initializing the background model from multiple frames, the best strategy is to use as few initial frames as possible while still suppressing the “ghost”. In addition, this makes it possible to detect more frames when no moving target exists in the first frame. Some algorithms select one sample point from each initialization frame, so they need at least N frames to initialize the background model. This is why the historical pixels of multiple frames are assembled in a certain proportion to initialize the background model. For multi-frame initialization, the odd frames, the even frames and the interval-two frames are compared in this paper. The experimental results are shown in Figure 3. The sequences in Figure 3(a) come from the PETS 2009 dataset and the Change detection dataset (top to bottom).

Comparing the first-frame initialization with the odd-frames initialization shows that the improved initialization has a positive effect on “ghost” suppression. The ViBe algorithm eliminates the “ghost” by updating the background model, and it usually needs more than 100 frames to achieve complete elimination. In contrast, we use the odd frames among the first m frames of the video sequence to initialize the background model, which largely suppresses the “ghost” right after initialization and reduces some noise, as shown in Figure 3(c).

From Figures 3(c) and (d), the even-frames initialization misses more detections. Comparing Figure 3(e) with Figure 3(c), the odd-frames initialization performs better than the interval-two-frames initialization. In addition, the initialization phase of the odd-frames initialization requires 1 frame fewer than that of the even-frames initialization and 6 frames fewer than that of the interval-two-frames initialization. Note that the number of frames required by the initialization phase is not the same as the number of frames used to initialize the background model. The latter is the same for all three initializations, namely (m+1)/2 frames.

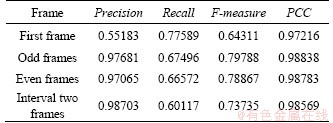

Table 1 compares the average performance of the different initialization methods over the 50 frames after the initialization phase in the above-mentioned videos. It can be seen that the odd-frames initialization outperforms the first-frame initialization of ViBe in terms of Precision, F-measure and PCC. In addition, the F-measure and PCC of the odd-frames initialization are better than those of the even-frames initialization and the interval-two-frames initialization.

In summary, there are three reasons for selecting the odd-frames initialization: 1) it can suppress the “ghost” when the first frame contains moving targets; 2) when the first frame contains moving targets, it performs better than the even-frames and interval-two-frames initializations; 3) its initialization phase costs fewer frames than those of the even-frames and interval-two-frames initializations.

3.1.2 Combine neighborhood pixel spatial consistency principle

To solve the remaining “ghost” problem (we call it blur) in the PETS 2006 and pedestrians test videos, we apply the neighborhood pixel spatial consistency principle to check the suspicious pixels, which can rapidly eliminate the blur. Figures 4(a) and (g) are from PETS 2006, and Figures 4(d) and (j) are from the pedestrians video. They all come from the Change detection dataset. From Figures 4(c) and (f), partial blurs (marked with red circles) remain after the improved initialization, and the ViBe algorithm requires more than 100 frames to eliminate them completely.

Figure 3 Comparison of different initialization methods:

Table 1 Performance of different initialization methods

According to the spatial consistency principle of neighborhood pixels, if the current suspicious “ghost” pixel matches the background sample sets of its neighborhood pixels well, the suspicious pixel can be regarded as a “ghost” pixel. Pixels are processed from left to right and from top to bottom. In Figure 5, for the pixel x, its neighborhood pixels k1, k2, k3, k4 have already been classified, whereas u1, u2, u3, u4 have not yet been processed, so the background sample sets of k1, k2, k3, k4 are reliable. If x is determined to be a suspicious “ghost” pixel, its own background sample set may be unreliable. In this paper, the number of matches between x and the background sample sets of k1, k2, k3, k4 is counted. If this number is dα or more, we determine x to be a “ghost” pixel and update the background model immediately; otherwise, x is a foreground pixel. dα is set to 2.

Figure 4 Problem of blurs and elimination:

Figure 5 Neighborhood pixels of x

To reduce the amount of computation, we do not check all foreground pixels, but use a statistical method instead. If a pixel is classified as a foreground pixel in Ng consecutive frames, it is regarded as a suspicious “ghost” pixel. We then use the above method to determine whether the pixel is a “ghost” or not.
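The two-stage check can be sketched as below. The text does not specify exactly how the matching degree against k1, …, k4 is counted, so this sketch assumes that a neighbor "agrees" when the value of x passes the ordinary ViBe test against that neighbor's sample set, and that x is a “ghost” when at least dα neighbors agree. The function names are hypothetical, and Ng is left as a required argument because its value is not given.

```python
def matches_background(value, samples, radius=20, d_min=2):
    """Ordinary ViBe test of a value against one sample set."""
    return sum(1 for s in samples if abs(value - s) < radius) >= d_min

def is_ghost(value, neighbor_sample_sets, d_alpha=2, radius=20, d_min=2):
    """Spatial consistency check for a suspicious pixel x.

    neighbor_sample_sets holds the sample sets of the already processed
    neighbors k1..k4. Assumption: x is a ghost when at least d_alpha of
    them accept its value as background.
    """
    agreeing = sum(1 for samples in neighbor_sample_sets
                   if matches_background(value, samples, radius, d_min))
    return agreeing >= d_alpha

def update_foreground_streak(streak, is_foreground, n_g):
    """Track consecutive foreground frames; flag the pixel after n_g."""
    streak = streak + 1 if is_foreground else 0
    return streak, streak >= n_g
```

Only pixels flagged by `update_foreground_streak` need to be passed to `is_ghost`, which keeps the extra cost small.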

From Figures 4(h) and (k), it can be seen that some blurs still remain after more than 20 frames of initialization when only the improved initialization is used, and the ViBe algorithm’s update strategy requires nearly 80 frames to eliminate them completely. By combining the neighborhood pixel spatial consistency principle, however, the blurs can be completely eliminated after 21 frames of the improved initialization.

3.2 Adaptive threshold segmentation strategy

The ViBe algorithm uses a global fixed threshold to segment the foreground and background, which works well for a static background but cannot achieve correct segmentation for a dynamic complex background. If a fixed threshold were used, the background of a high dynamic region would be detected as foreground pixels, which results in false detections. The threshold should not be fixed for all pixels, because they may lie in backgrounds of different complexity. Instead, it should be a pixel-wise variable [21]. So, for a dynamic background, the segmentation threshold should be raised so that the background pixels of the high dynamic region are not detected as foreground pixels. And for a simple static background (that is, a low dynamic region), the segmentation threshold should be appropriately reduced so that foreground with minor changes can be detected.

To get the adaptive segmentation threshold, we must determine the background complexity of the current pixel, then adaptively get a segmentation threshold for the pixel. In ViBe [22], the standard deviation of the background sample set is calculated to determine the background complexity of the current pixel. In this paper, we combine the background sample set of the current pixel and the neighborhood pixels of the current pixel to determine the background complexity. The sum of the mean deviation of the background sample set and the sum of mean deviation in the Sw×Sh neighborhoods are calculated. Then, the above calculation results are used to reflect the complexity of the current background, so as to realize the adaptive segmentation threshold. The steps are as follows:

1) Calculate the mean value mbg of the background sample set of the current pixel x;

$$m_{bg} = \frac{1}{N} \sum_{i=1}^{N} y_i(x) \tag{3}$$

2) Calculate the sum sbg of the difference between mbg and the background sample set of x;

$$s_{bg} = \sum_{i=1}^{N} \left| y_i(x) - m_{bg} \right| \tag{4}$$

3) Calculate the mean value mnei of the Sw×Sh neighborhood pixels of the current pixel x. The pj(x) is the j-th neighborhood pixel;

$$m_{nei} = \frac{1}{S_w \times S_h} \sum_{j=1}^{S_w \times S_h} p_j(x) \tag{5}$$

4) Calculate the sum nnei of the difference between mnei and the neighborhood pixels in the Sw×Sh;

$$n_{nei} = \sum_{j=1}^{S_w \times S_h} \left| p_j(x) - m_{nei} \right| \tag{6}$$

5) Obtain the background complexity and adaptive segmentation threshold Radaptive.
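Steps 1) to 4) can be sketched as follows for a grey-level frame. The helper names are hypothetical, and two details are assumptions of this sketch: the deviations are taken as absolute values, and the Sw×Sh window includes the current pixel and is clamped at the image border.

```python
def mean_deviation_sum(values):
    """Sum of absolute deviations of the values from their mean."""
    mean = sum(values) / len(values)
    return sum(abs(v - mean) for v in values)

def complexity_features(samples, frame, y, x, sw=3, sh=3):
    """Return (s_bg, n_nei) for pixel (y, x).

    samples is the background sample set of the pixel; the window of
    sw x sh pixels is centered on (y, x).
    """
    h, w = len(frame), len(frame[0])
    window = []
    for dy in range(-(sh // 2), sh // 2 + 1):
        for dx in range(-(sw // 2), sw // 2 + 1):
            ny = min(max(y + dy, 0), h - 1)  # assumed border policy: clamp
            nx = min(max(x + dx, 0), w - 1)
            window.append(frame[ny][nx])
    return mean_deviation_sum(samples), mean_deviation_sum(window)
```

For instance, `mean_deviation_sum([10, 20, 30])` has mean 20 and deviations 10, 0 and 10, so it returns 20.0.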

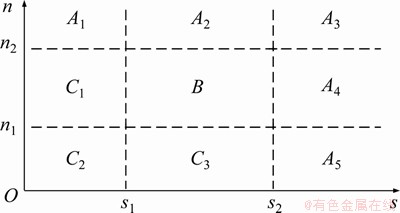

Since the sum of the mean deviation of the background sample set and the sum of the mean deviation of the neighborhood pixels are calculated separately, we obtain two unrelated values sbg and nnei. Both sbg and nnei reflect the background complexity, so the background sample information and the neighborhood information each have their own scopes for judging it. For the background sample information, when sbg>s2, the background can be regarded as a high dynamic background; when sbg lies between s1 and s2, it can be regarded as a normal dynamic background; and when sbg<s1, it can be regarded as a low dynamic background. The same applies to nnei, with n1 and n2, for the neighborhood information. But when only one of them is considered, the background complexity sometimes cannot be judged accurately. In Figure 6, we put them together in a two-dimensional space (the two values are unrelated; this is only for ease of description), so that sbg and nnei can be treated as a two-dimensional random variable (sbg, nnei).

In Figure 6, the two-dimensional space is divided into nine areas by using the scopes of two data.

Figure 6 Background complexity judgment area division

For example, for A1, when only the background sample information is considered, the pixel’s background is a low dynamic background, but it is determined to be a high dynamic background according to the neighborhood information. In this situation, we still regard the background of the current pixel as a high dynamic background. Therefore, the nine areas are classified into three regions, A, B and C: A1, …, A5 are classified as A, and the same applies to B and C. For the comprehensive judgment of the background complexity, A is the high dynamic background, B is the normal dynamic background, and C is the low dynamic background. n1, n2, s1 and s2 are empirical values obtained from a large number of experiments. In this paper, we set n1=15, n2=40, s1=25 and s2=80. For different background complexity, we use an adaptive factor to obtain the adaptive segmentation threshold Radaptive. The adaptive formula is:

(7)

where χinc/dec is a fixed parameter; (sbg, nnei) is the two-dimensional random variable formed by the sum of the mean deviation of the background sample set and the sum of the mean deviation of the neighborhood pixels; A, B and C are the two-dimensional threshold regions that reflect different background complexity; R is the segmentation threshold of the previous frame; Radaptive is the adaptive segmentation threshold for the pixel in the current frame. Depending on the background complexity, the segmentation threshold gradually increases or decreases to realize the adaptive change. But the adaptive threshold Radaptive should be neither too large nor too small: if it is too large, foreground detections are missed, and if it is too small, many false detections appear. Based on many experiments, Radaptive should range from 18 to 45.
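The region judgment and the threshold adaptation can be illustrated with the sketch below. Two points are assumptions rather than statements of the paper: the joint region is taken as the more dynamic of the two individual judgments (which reproduces the A1 example and yields five high dynamic areas out of nine), and the threshold is changed multiplicatively by the factor 1±χinc/dec. The function names are hypothetical.

```python
def level(value, low, high):
    """0 = low, 1 = normal, 2 = high dynamic, for a single feature."""
    if value > high:
        return 2
    if value < low:
        return 0
    return 1

def background_region(s_bg, n_nei, s1=25, s2=80, n1=15, n2=40):
    """Joint judgment: assumed to be the more dynamic of the two levels."""
    joint = max(level(s_bg, s1, s2), level(n_nei, n1, n2))
    return ("C", "B", "A")[joint]

def adaptive_threshold(r_prev, region, chi=0.05, r_min=18, r_max=45):
    """Raise R in region A, lower it in region C, keep it in region B.

    Assumption: multiplicative step of (1 +/- chi); the result is
    restricted to the range [r_min, r_max] given in the text.
    """
    if region == "A":
        r = r_prev * (1 + chi)
    elif region == "C":
        r = r_prev * (1 - chi)
    else:
        r = r_prev
    return min(max(r, r_min), r_max)
```

Applied frame after frame, a pixel that stays in region A sees its threshold grow gradually from the initial value until it saturates at 45.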

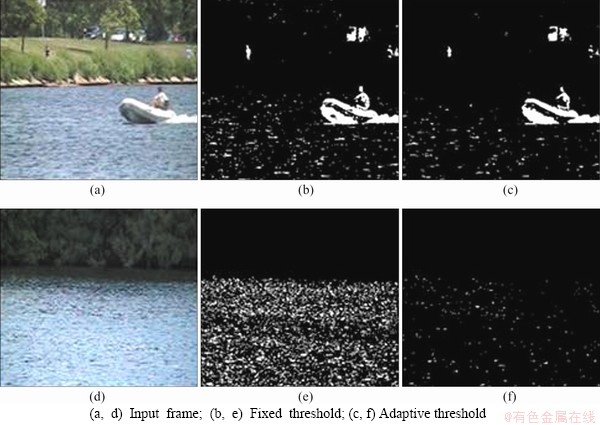

The detection effects of the adaptive segmentation threshold are shown in Figure 7. Figures 7(a) and (d) are from the Change detection dataset.

In Figures 7(b) and (e), since the background consists of dynamic waves, the ViBe algorithm with the fixed segmentation threshold produces a large number of false foreground points. In Figures 7(c) and (f), the adaptive segmentation threshold of the improved algorithm reduces some false detections and improves the detection accuracy. But there is still a slight deficiency compared with the ground truth in Figure 7(c).

3.3 Background model update

The ViBe algorithm updates the background model by using the fixed update rate, which is not suitable for the complex dynamic background and illumination change. It increases the probability of false detections for later frames. We make the following improvements.

Figure 7 Comparison of fixed threshold and the adaptive threshold:

1) The update rate is adapted to the background complexity. When the background is a high dynamic background, the probability of false detection becomes high; therefore, the update rate should be slowed down. When the background is a low dynamic background, the update rate should be increased. The update rate is the reciprocal of the time sampling factor, so increasing the time sampling factor slows down the update rate correspondingly [22-25]. We use an adaptive time sampling factor to achieve the adaptive update rate, as shown in Eq. (8):

(8)

where f is the time sampling factor of the ViBe algorithm; fadaptive is the adaptive time sampling factor; △inc/dec is the parameter to control time sampling factor.

2) When the current pixel is detected as a background pixel, the ViBe algorithm randomly selects a background sample to update. Sometimes a correct sample is replaced, which increases false detections in the following video frames. Therefore, this paper uses a “random+fixed” update strategy to update the background model. The random strategy is the same as in ViBe, whereas the fixed strategy replaces the sample that has the largest Euclidean distance from the current pixel with the current pixel value. This can increase the accuracy of the background model update.
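The two improvements can be sketched together as below. Several points are assumptions of this illustration: the time sampling factor is adapted additively (f+△inc/dec for region A, f−△inc/dec for region C, unchanged for region B), and both the random and the fixed replacement are applied in the same update. The function names are hypothetical.

```python
import random

def adaptive_sampling_factor(region, f=16, delta=8):
    """Larger factor (slower update) for high dynamic backgrounds."""
    if region == "A":
        return f + delta  # assumed additive step
    if region == "C":
        return max(1, f - delta)
    return f

def random_plus_fixed_update(samples, value, region, rng=None, f=16, delta=8):
    """Update one background pixel's sample set; return True if updated."""
    rng = rng or random.Random()
    # The update happens with probability 1 / f_adaptive.
    if rng.randrange(adaptive_sampling_factor(region, f, delta)) != 0:
        return False
    # Random part: overwrite a randomly chosen sample, as in ViBe.
    samples[rng.randrange(len(samples))] = value
    # Fixed part: overwrite the sample farthest from the current value.
    farthest = max(range(len(samples)), key=lambda i: abs(samples[i] - value))
    samples[farthest] = value
    return True
```

With the parameters f=16 and △inc/dec=8, this gives update probabilities of 1/24, 1/16 and 1/8 for regions A, B and C.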

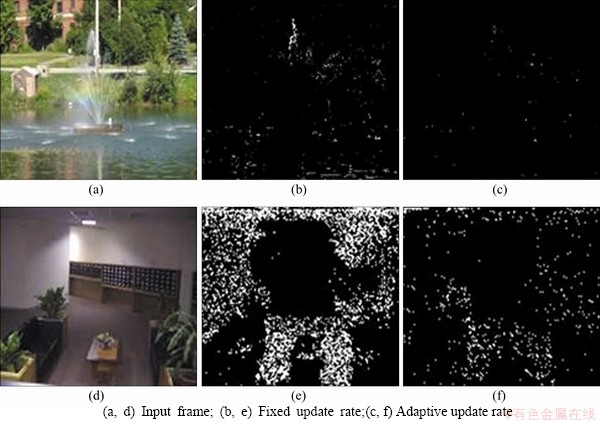

The comparison between the improved background model update and the ViBe algorithm is shown in Figure 8. Figure 8(a) comes from the Change detection dataset, and Figure 8(d) comes from the I2R dataset.

Figure 8(a) shows a complex dynamic background. The fixed update rate increases the probability of false detection, whereas the adaptive update has a good effect on the subsequent detection. In Figure 8(e), a sudden illumination change produces a multitude of false detections. In the subsequent detection, the adaptive update can quickly integrate the false foreground pixels into the background.

Figure 8 Comparison of fixed update rate and adaptive update rate:

3.4 Post-processing of detected results

3.4.1 Perform a certain hole filling process

The detection results of the ViBe algorithm and the improved algorithm may contain holes in the foreground. In this paper, we fill some of these holes. The basic idea is to find all the contours in the detection result and calculate each contour’s area with the function cvContourArea of the OpenCV library. If the area of a contour is less than a certain value (we use 300, chosen through many experiments), the contour is filled. To a certain extent, this has a good effect.
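The idea can be illustrated without OpenCV by the following sketch, which swaps the contour-based approach for a flood fill: it finds 4-connected background regions that are fully enclosed by foreground and fills those whose pixel count is below the limit. Pixel count and contour area are not identical measures, so the value 300 would need to be re-tuned; the function name `fill_small_holes` is hypothetical.

```python
from collections import deque

def fill_small_holes(mask, max_area=300):
    """Fill enclosed background regions smaller than max_area pixels.

    mask is a 2-D list with 1 for foreground and 0 for background;
    a new mask is returned and the input is left unchanged.
    """
    h, w = len(mask), len(mask[0])
    out = [row[:] for row in mask]
    seen = [[False] * w for _ in range(h)]
    for sy in range(h):
        for sx in range(w):
            if mask[sy][sx] != 0 or seen[sy][sx]:
                continue
            # Flood-fill one 4-connected background region.
            region, touches_border = [], False
            queue = deque([(sy, sx)])
            seen[sy][sx] = True
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                if y in (0, h - 1) or x in (0, w - 1):
                    touches_border = True
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] == 0 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # A region touching the border is true background, not a hole.
            if not touches_border and len(region) < max_area:
                for y, x in region:
                    out[y][x] = 1
    return out
```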

3.4.2 Eliminate isolated noises

In the detection results, some noise often exists in the form of isolated pixels. For this case, we examine the eight-neighborhood of each such pixel to eliminate the isolated noise. The basic idea is to count the number of background pixels in the eight-neighborhood of the noise pixel. If this number is greater than the given threshold dk, the noise pixel is treated as a background pixel; otherwise, it remains a foreground pixel. We select dk=6. This method of eliminating isolated noise preserves the edges of the foreground, and the experimental results show that it is effective.
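A minimal sketch of this rule on a binary mask is given below. The function name `remove_isolated_noise` is hypothetical, and treating positions outside the image as background is an assumption of this sketch.

```python
def remove_isolated_noise(mask, d_k=6):
    """Turn foreground pixels with more than d_k background neighbors
    into background. mask uses 1 for foreground and 0 for background;
    a new mask is returned and decisions are based on the input mask.
    """
    h, w = len(mask), len(mask[0])
    out = [row[:] for row in mask]
    for y in range(h):
        for x in range(w):
            if mask[y][x] == 0:
                continue
            background = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    if (dy, dx) == (0, 0):
                        continue
                    ny, nx = y + dy, x + dx
                    # Assumption: positions outside the image count as background.
                    if not (0 <= ny < h and 0 <= nx < w) or mask[ny][nx] == 0:
                        background += 1
            if background > d_k:
                out[y][x] = 0
    return out
```

With dk=6, a pixel on the corner of a solid foreground block has only five background neighbors and is kept, which is how the rule preserves foreground edges.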

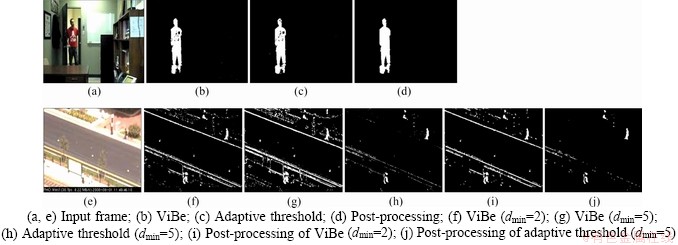

The effect of the post-processing in the improved algorithm is shown in Figure 9. The video sequences in Figures 9(a) and (e) are from the Change detection dataset.

In the office test video, there are faint changes in light. The adaptive threshold can suppress some noise, but the detection result has some holes. From Figure 9(d), the post-processing fills some holes and eliminates the isolated noise. In the second test video, the background is a high dynamic background because of camera jitter. The ViBe algorithm with dmin=2, the ViBe algorithm with dmin=5 and the adaptive segmentation threshold are compared (note: the greater dmin is, the greater the probability that a pixel is detected as foreground, so Figure 9(g) contains more false foreground than Figure 9(f)). It can be seen that the adaptive threshold has a good effect. From Figures 9(i) and (j), the post-processing has positive effects.

4 Experimental results and analysis

4.1 Experimental parameters and evaluation index

The experiments are implemented in C++ on a computer with an Intel Core i7 CPU and 8 GB of RAM. The parameters of the improved algorithm are as follows: the number of frames in the initialization phase m=15; the size of the background sample set N=20; the initial foreground and background segmentation threshold R=20; the minimum number of matches dmin=5; the time sampling factor f=16; the parameter controlling the adaptive segmentation threshold χinc/dec=0.05; the parameter controlling the adaptive time sampling factor △inc/dec=8; and Sw×Sh is the 3×3 neighborhood of the current pixel.

Figure 9 Comparison of ViBe with post-processing of detection:

In this paper, the basic metrics [26] used to evaluate the detection results are Pre (precision), Re (recall), FM (F-measure), FPR (false positive rate), FNR (false negative rate), PWC (percentage of wrong classifications), PCC (percentage of correct classifications), Sp (specificity) and PM (performance measuring).

(9)

(10)

(11)

(12)

(13)

(14)

(15)

(16)

(17)

TP (True Positive): total number of pixels classified as foreground correctly;

FP (False Positive): total number of pixels classified as foreground by mistake;

FN (False Negative): total number of pixels classified as background by mistake;

TN (True Negative): total number of pixels classified as background correctly.
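For orientation, most of these metrics can be computed from the four counts as sketched below. The formulas are the commonly used definitions and are assumed, not confirmed, to match the paper's Eqs. (9) to (17). PCC is returned as a fraction, PWC as a percentage, and PM is omitted because its definition cannot be recovered from the text. The function name `detection_metrics` is hypothetical.

```python
def detection_metrics(tp, fp, fn, tn):
    """Common pixel-level detection metrics from TP, FP, FN and TN.

    Raises ZeroDivisionError if a denominator is zero (for example,
    when a frame contains no foreground at all).
    """
    total = tp + fp + fn + tn
    pre = tp / (tp + fp)
    re = tp / (tp + fn)
    return {
        "Pre": pre,
        "Re": re,
        "FM": 2 * pre * re / (pre + re),
        "FPR": fp / (fp + tn),
        "FNR": fn / (tp + fn),
        "PWC": 100 * (fp + fn) / total,
        "PCC": (tp + tn) / total,
        "Sp": tn / (tn + fp),
    }
```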

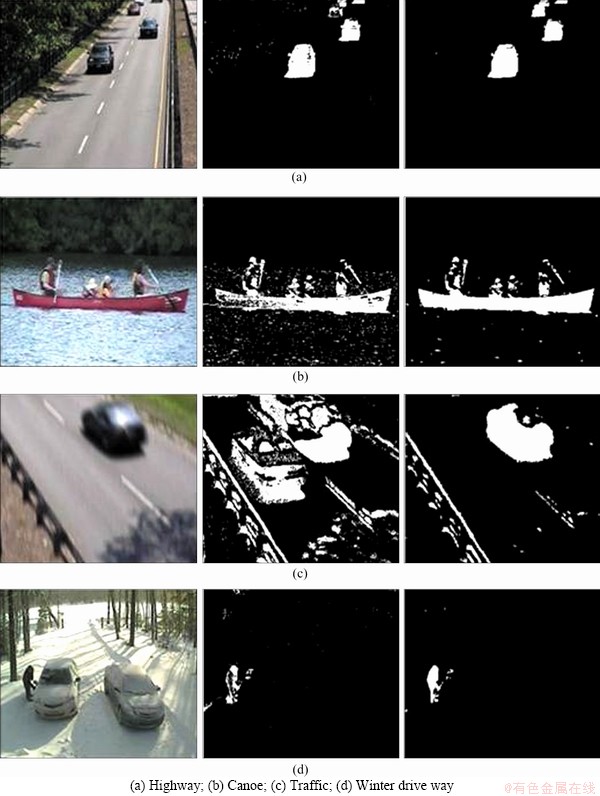

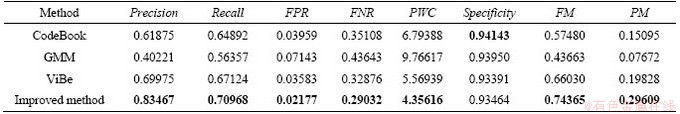

We calculated the average of each performance metric of the four algorithms over the four scenes, as shown in Table 2. It shows that the improved method is superior to the ViBe algorithm: the comprehensive evaluation indicators FM and PM improve by 8.3% and 9.8%, respectively, compared with the ViBe algorithm.

5 Conclusions

To solve the problems of the ViBe algorithm, we propose an improved algorithm. Firstly, the historical pixels of the odd frames are used to initialize the background model and are combined with the spatial consistency principle of neighborhood pixels, which can quickly solve the “ghost” problem. In addition, to generate the adaptive segmentation threshold and the adaptive update rate, the improved method combines the background samples with neighborhood pixel information to judge the background complexity. Finally, the detection results are post-processed, so that, to a certain extent, the foreground holes are filled and the isolated noise is eliminated. The experimental results demonstrate that the improved method enhances the robustness against dynamic complex backgrounds and can quickly suppress the “ghost”.

Figure 10 Comparison of ViBe and improved algorithm:

Table 2 Average value of each performance in 4 algorithms

Based on the ideas and methods of this paper, we will research shadow elimination in the future.

Contributors

The overarching research goals were developed by LIU Ling, CHAI Guo-hua and QU Zhong. CHAI Guo-hua and QU Zhong determined the dataset of the experiment. LIU Ling and CHAI Guo-hua established the models, and completed experimental work. LIU Ling, CHAI Guo-hua, and QU Zhong completed experimental data analysis. The initial draft of the manuscript was written by LIU Ling and CHAI Guo-hua. All authors replied to reviewers’ comments and revised the final version.

Conflict of interest

LIU Ling, CHAI Guo-hua, and QU Zhong declare that they have no conflict of interest.

References

[1] SENGAR S S, MUKHOPADHYAY S. Moving object detection based on frame difference and W4 [J]. Signal, Image and Video Processing, 2017, 11(7): 1357-1364. DOI: 10.1007/s11760-017-1093-8.

[2] YU Xiao-han, CHEN Xiao-long, HUANG Yong, ZHANG Lin, GUAN Jian, HE You. Radar moving target detection in clutter background via adaptive dual-threshold sparse Fourier transform [J]. IEEE Access, 2019, 7: 58200-58211. DOI: 10.1109/ACCESS.2019.2914232.

[3] LI Xiao-long, SUN Zhi, ZHANG Tian-xian, YI Wei, CUI Guo-long, KONG Ling-jiang. WRFRFT-based coherent detection and parameter estimation of radar moving target with unknown entry/departure time [J]. Signal Processing, 2020, 166: 107228. DOI: 10.1016/j.sigpro.2019.07.021.

[4] ZHOU Yuan, MAO Ai-ling, HUO Shu-wei, LEI Jian-jun, KUNG Sun-yuan. Salient object detection via fuzzy theory and object-level enhancement [J]. IEEE Transactions on Multimedia, 2019, 21(1): 74-85. DOI: 10.1109/TMM.2018.2845667.

[5] BAE G, CHO S I, KANG S J, KIM Y H. Dual-dissimilarity measure-based statistical video cut detection [J]. Journal of Real-Time Image Processing, 2019, 16(6): 1987-1997. DOI: 10.1007/s11554-017-0696-1.

[6] MAHRAZ M A, RIFFI J, TAIRI H. High accuracy optical flow estimation based on PDE decomposition [J]. Signal, Image and Video Processing, 2015, 9(6): 1409-1418. DOI: 10.1007/s11760-013-0594-3.

[7] YANG Yong-peng, YANG Zhen-zhen, LI Jian-lin, FAN Lu. Foreground-background separation via generalized nuclear norm and structured sparse norm based low-rank and sparse decomposition [J]. IEEE Access, 2020, 8: 84217-84229. DOI: 10.1109/ACCESS.2020.2992132.

[8] ZHAO Chen-qiu, SAIN Aneeshan, QU Ying, GE Yong-xin, HU Hai-bo. Background subtraction based on integration of alternative cues in freely moving camera [J]. IEEE Transactions on Circuits and Systems for Video Technology, 2019, 29(7): 1933-1945. DOI: 10.1109/TCSVT.2018.2854 273.

[9] CHEN Yu-qiu, SUN Zhan-li, LAM K M. An effective subsuperpixel-based approach for background subtraction [J]. IEEE Transactions on Industrial Electronics, 2020, 67(1): 601-609. DOI: 10.1109/TIE.2019.2893824.

[10] LE M Y, ANGELINI E D, OLIVO-MARIN J C. An unbiased risk estimator for image denoising in the presence of mixed Poisson-Gaussian noise [J]. IEEE Transactions on Image Processing, 2014, 23(6): 2750-2750. DOI: 10.1109/TIP. 2014.2300821.

[11] SHAH M, DENG J D, WOODFORD B J. A self-adaptive codeBook (SACB) model for real-time background subtraction [J]. Image and Vision Computing, 2015, 38: 52-64. DOI: 10.1016/j.imavis.2015.02.001.

[12] ZHU Bing, TIAN Lian-fang, DU Qi-liang, YU Lu-bin, SHI Li-xin. An improved background modeling algorithm based on the codebook model [C]// Chinese Control and Decision Conference(CCDC). Chongqing, 2017: 3998-4003.

[13] GAO Jun, ZHU Hong-hui. Moving object detection for video surveillance based on improved ViBe [C]// Chinese Control and Decision Conference(CCDC). Yinchuan, 2016: 6259-6263.

[14] LIU Zhong-kang, AN Dao-xiang, HUANG Xiao-tao. Moving target shadow detection and global background reconstruction for video SAR based on single-frame imagery [J]. IEEE Access, 2019, 7: 42418-42425. DOI: 10.1109/ ACCESS.2019.2907146.

[15] CONTE D, FOGGIA P, PERCANNELLA G, TUFANO F, VENTO M. An experimental evaluation of foreground detection algorithms in real scenes [J]. EURASIP Journal on Advances in Signal Processing, 2010: 373941. DOI: 10.1155/2010/ 373941.

[16] LU Jia-qing, LIU Li-cheng, HAO Lu-guo, ZHANG Wen-zhong. Adaptive moving object extraction algorithm based on visual background extractor [J]. Journal of Application, 2015, 35(7): 2029-2032. (in Chinese)

[17] CHUN-HYOK P, ZHAO Hai, ZHU Hong-bo, PAN Yi-lin. A novel motion detection approach based on the improved ViBe algorithm [C]// Chinese Control and Decision Conference. Yinchuan, 2016: 7081-7086.

[18] CHANG Le, LIU Zheng-hua, REN Yan. Improved adaptive ViBe and the application for segmentation of complex background [J]. Mathematical Problems in Engineering, 2016: 3835952. DOI: 10.1155/2016/3835952.

[19] FAN Zhi-hui, WANG Hui. Robust moving object detection based on spatio-temporal confidence relationship [J]. Electronics Letters, 2016, 52(10): 825-827. DOI: 10.1049/el.2015.4544.

[20] YIN Bao-cai, ZHANG Jing, WANG Zeng-fu. Background segmentation of dynamic scenes based on dual model [J]. IET Computer Vision, 2014, 8(6): 545-555. DOI: 10.1049/iet-cvi.2013.0319.

[21] van DROOGENBROECK M, PAQUOT O. Background subtraction: Experiments and improvements for ViBe [C]// 2012 IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops (CVPR Workshops). RI: Providence, 2012: 32-37.

[22] QU Zhong, HUANG Xiao-ling. The foreground detection algorithm combined the temporal-spatial information and adaptive visual background extraction [J]. Imaging Science Journal, 2017, 65(1): 49-61. DOI: 10.1080/13682199.2016. 1258509.

[23] QU Zhong, HUANG Xu, LIU Ling. An improved algorithm of multi-exposure image fusion by detail enhancement [J]. Multimedia Systems, 2020, 27(1): 33-44. DOI: 10.1007/ s00530-020-00691-4.

[24] QU Zhong, LI Jun, BAO Kang-hua, SI Zhi-chao. An unordered image stitching method based on binary tree and estimated overlapping area [J]. IEEE Transactions on Image Processing, 2020, 29: 6734-6744. DOI: 10.1109/TIP. 2020.2993134.

[25] QU Zhong, CHEN Si-qi, LIU Yu-qin, LIU Ling. Linear seam elimination of tunnel crack images based on statistical specific pixels ratio and adaptive fragmented segmentation [J]. IEEE Transactions on Intelligent Transportation Systems, 2020, 21(9): 3599-3607. DOI: 10.1109/TITS.2019.2929483.

[26] ZHANG Rong-guo, LIU Xiao-jun, HU Jing, CHANG Kai, LIU Kun. A fast method for moving object detection in video surveillance image [J]. Signal, Image and Video Processing, 2017, 11(5): 841-848. DOI: 10.1007/s11760-016-1030-2.

(Edited by ZHENG Yu-tong)

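Chinese summary

Moving target detection based on improved ghost suppression and adaptive visual background extraction

Abstract: The visual background extraction algorithm (ViBe) initializes the background model with the first frame of the video, which easily produces the ghost phenomenon. Because ViBe uses a fixed segmentation threshold to separate the foreground from the background, it yields many false detections for highly dynamic backgrounds. To address these problems, this paper proposes an improved “ghost” suppression and adaptive visual background extraction algorithm. Firstly, using the temporal and spatial information of pixels, a certain combination of historical pixels is used to initialize the background model in the odd frames of the video sequence. Secondly, the background sample set is combined with neighborhood pixels to determine the complexity of the background and obtain an adaptive segmentation threshold. Then, the update rate is adjusted according to the complexity of the background. Finally, the detection result is post-processed to achieve better detection. The results show that the improved algorithm not only suppresses the ghost quickly but also achieves good detection in complex dynamic backgrounds.

Key words: moving target detection; ghost suppression; adaptive visual background extraction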